The Broadcasting Arms Race

Live sports broadcasting in 2026 is no longer just about switching camera angles. Networks and Media companies are engaged in a massive technological arms race to capture viewer attention through Augmented Reality (AR) overlays, real-time player velocity metrics, and automated highlight generation.

When an NFL wide receiver runs a post route, viewers expect to see his exact trajectory, speed, and separation distance painted directly onto the grass in real-time.

While graphics engines like Unreal Engine and Vizrt can render these visuals beautifully, they rely entirely on the data fed to them by Computer Vision (CV) tracking models. If the underlying machine learning model lags or misidentifies a player, the broadcast graphic drifts off the field, instantly destroying the live viewing experience.

Achieving 0-latency, broadcast-quality AI tracking requires solving two massive data bottlenecks: Semantic Segmentation and Multi-Camera Synchronization.

To deploy live AR routes and velocity stats, broadcast networks require ultra-low latency computer vision models trained on flawless Ground Truth data.

To deploy live AR routes and velocity stats, broadcast networks require ultra-low latency computer vision models trained on flawless Ground Truth data.

1. Semantic Segmentation: Separating the Athlete from the Noise

The most complex task in broadcast AR is physically placing a graphic behind a player but in front of the grass. This creates a 3D depth illusion on a 2D screen.

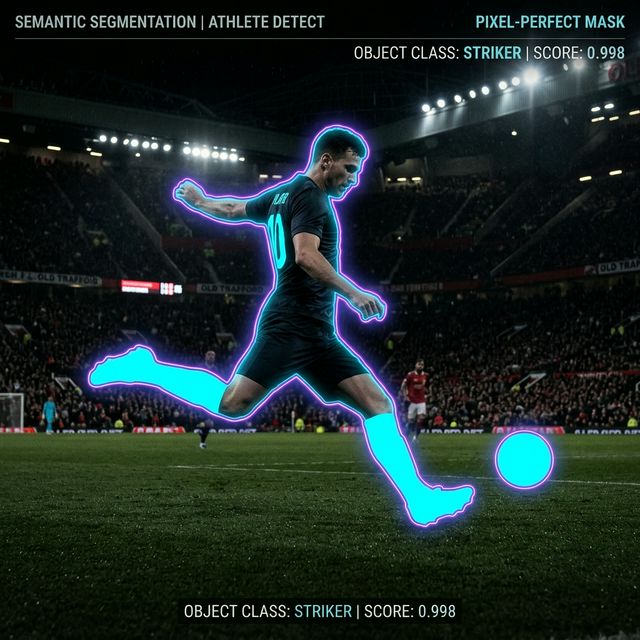

To teach an AI to do this in real-time, the model must understand exactly which pixels belong to the athlete and which belong to the stadium. This requires Instance or Semantic Segmentation.

The Annotation Challenge

Unlike standard bounding boxes (which are simple squares), semantic segmentation requires data annotators to draw a hyper-precise polygon outline around every single contour of the player's body—fingers, shoelaces, helmets, and loose jerseys.

A single frame can take minutes to annotate perfectly. In broadcast sports, where camera angles change dynamically and motion blur is rampant, generating enough pixel-perfect segmentation masks to generalize an ML model is a monumental logistical challenge.

If the segmentation data is poorly annotated (e.g., the mask chops off part of the player's arm), the final broadcast AI model will glitch, and the AR graphic will bleed right over the player's limb on live television.

Pixel-perfect semantic segmentation masks are required to render AR graphics cleanly behind athletes in real-time.

Pixel-perfect semantic segmentation masks are required to render AR graphics cleanly behind athletes in real-time.

2. Multi-Camera Synchronization and 3D Traps

Traditional tracking models run object detection (bounding boxes) on a single 2D camera feed. However, modern broadcast analytics must calculate true 3D spatial data—like the exact height of a basketball during a buzzer-beating shot—to generate volumetric graphics.

To calculate 3D depth, the computer vision system must triangulate the ball's position using data from 4, 8, or even 12 synchronized cameras placed around the arena.

The Annotation Challenge

Training a multi-camera AI requires temporally synced multi-view annotation.

A human data expert isn't just labeling one video; they are scrubbing through 8 simultaneous video arrays. They must ensure that the bounding box on Player #7 in Camera A is perfectly linked with the bounding box on Player #7 in Camera C.

If the sync drifts by even a few milliseconds, the triangulation fails, and the 3D model collapses.

Annotating multi-camera setups requires extreme temporal precision to calculate genuine Volumetric 3D tracking data.

Annotating multi-camera setups requires extreme temporal precision to calculate genuine Volumetric 3D tracking data.

Why Broadcast Networks Cannot Rely on Auto-Labeling

When dealing with billion-dollar broadcasting rights, there is zero tolerance for error.

Many Media companies attempt to bootstrap their CV models using open-source auto-labelers. While these tools speed up initial ingestion, they fundamentally struggle with the "Broadcast Edge Cases": overlapping players, deep stadium shadows, confetti drops, and sudden camera flashes.

Auto-labelers generate "noisy" data. A model trained on noisy data requires more milliseconds to process live inference, killing the 0-latency requirement of live TV. A model trained on pure, human-verified data runs leaner, faster, and more confidently.

Elevating Broadcast Models with Train Matricx

For Broadcast and Media companies building the future of live spectator sports, data quality is not an option—it is a strict requirement.

This is why leading networks partner with Train Matricx. We are not a crowdsource factory; we are a dedicated, highly trained operational workforce specializing exclusively in complex computer vision pipelines. When your engineers need 500,000 frames of temporally synchronized, multi-camera semantic segmentation, our managed teams deliver the 99.8% precision required to get your graphics onto live television without a glitch.

Ensure your broadcast isn't ruined by algorithmic drift.

If you are leading an AI or Analytics team at a Broadcast Media company, Book a Technical Consultation with Train Matricx today to discuss your data pipeline.

Authored By

Asif Ikbal